Recently I went through a lot of interviews with some fancy AI companies (Anthropic, Deepmind, Midjourney, Recraft, Black Forest Labs, Runway, Suno), also FAANG, also a lot of smaller startups. I didn’t necessarily progress far into the stages for all of them but got some offers in the end, e.g. from Perplexity.

This article is an attempt to summarize my observations and learnings - the questions* being asked, my mistakes at different stages, how to prepare, and useful tips and resources. Basically the things I wish I had known before starting the process, and which will be useful in the future anyway.

*The questions are sometimes slightly altered to not spoil the process of the companies.

Some more context:

- I was looking for ML Engineer / Research Engineer positions (Senior+). Mostly in GenAI (text/audio/images/video/3D/multimodal/etc) but occasionally other things slipped through

- I was only looking for positions in the UK or remote (and I already had a Global Talent visa so no sponsorship from employers was required)

- At the time of applying I had ~8 years of experience in Deep Learning and applications, including being Tech Lead at Snap and ~10 publications/patents in GenAI (which was somewhat weakened by the fact that I didn’t have experience of truly ‘large-scale’ training and in my last year I was bootstrapping a startup which was smaller scale&success than my previous job)

- I did not have any technical interviews in 5 years prior to this (I was an interviewer myself but that doesn’t count)

- All interviews happened in April-July 2025

I highly recommend having realistic expectations. While it’s possible to find some job in a month, it’s unlikely it will be the one you like.

I initially aimed to accept a good final offer within 2 months. In reality, in the first 2 months I got exactly zero offers (not even from the companies I wasn’t too keen on). Towards the end of the 3rd month I had 3 offers though. And I was basically searching full-time. It was a mix of some companies moving through the stages very slowly, not that many positions actually being open, and genuine failures on my side.

The interview process feels a lot harder now. Five years ago I got 3 offers out of 4 started processes, and now it was smth like 3/20 (counting only positions where I had 1+ interviews).

The realistic estimate to land a job now is probably 3-6 months.

Applying & getting the interviews

Before we go into interviewing, it’s important to cover how to get the interviews in the first place. Spoiler: I don’t know the right answer, but I can share my own experience and statistics.

Finding where to apply

While the dream position might accidentally find you w/o your effort at all, if you are at the stage when you (prepare to) actively look for a job it’s probably wise to set the goals first.

My strategy was basically a simple CRM:

- I made a google sheet with ~50 companies which might have interesting positions. Just the names I already knew. If you are going to do the same - think about which companies you like in general and which of them might have the job you are looking for.

- In the sheet I rated 1-10 fit from my perspective and from the employer’s perspective and got my priority sorting. I also reached out to connections for the companies where I had them. And looked into the special spam folder of my email called ‘recruiters’ to see if there were any invites to the remaining ones. For the ones where I didn’t have anything - looked up how to apply/if there’s anything open (e.g. Mira’s Thinking Machines had positions closed at the time but Ilya’s SSI had a simple form which you can fill, so did Anthropic, midjourney had email, some other companies like Krea described their application process - all of which I moved to the table to not forget).

- Note: the reality (in my case at least) is that you will have very few companies where the scores from both your and the employer’s perspective are high (mostly because you probably don’t want exactly the same job as you previously had)

- I put some action items & dates into the table for when to reach/ping/double-check if new positions opened/dates for scheduled interviews/etc

- (Later when recruiters reached out with new companies or I would notice an interesting one I just added rows to the table - ended up with ~60 entries total in the end)
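The priority sorting from the sheet can be sketched in a few lines of Python. This is only an illustration, not the actual sheet: the company names and action items are placeholders, and combining the two 1-10 scores by multiplying them is an assumption made for this sketch (the text above only says both scores were used to get a priority ordering).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Company:
    name: str
    my_fit: int          # 1-10, fit from the candidate's perspective
    employer_fit: int    # 1-10, estimated fit from the employer's perspective
    next_action: str = ""  # e.g. "ask for referral", "check positions again"

    @property
    def priority(self) -> int:
        # Assumption: multiply the scores, so a company needs to be
        # reasonably good on BOTH axes to end up near the top.
        return self.my_fit * self.employer_fit


def sort_by_priority(companies: List[Company]) -> List[Company]:
    """Return companies ordered from highest to lowest priority."""
    return sorted(companies, key=lambda c: c.priority, reverse=True)


# Placeholder rows, not real data
companies = [
    Company("Example A", my_fit=9, employer_fit=4, next_action="ask for referral"),
    Company("Example B", my_fit=6, employer_fit=8, next_action="apply via site"),
    Company("Example C", my_fit=8, employer_fit=8, next_action="ping recruiter"),
]

ranked = sort_by_priority(companies)
for c in ranked:
    print(f"{c.name}: priority={c.priority}, next: {c.next_action}")
```

A product penalises lopsided scores more than a sum would, which matches the observation that few companies score high on both sides. A plain spreadsheet formula does the same job, of course.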

Resume/CV

Once it was more or less clear which positions companies had, what their requirements were and so on, I worked on my CV. Since I hadn’t really updated it for nearly 5 years (some updates for visa-related things only but that’s different) it was quite a task.

I first tried to squeeze my 8 years of experience into a one-pager as my memory told me that’s the right format, but - LLMs helping or not - it really didn’t look good (too much info missing). As it turns out, a 2-page CV is recommended if you have 5+ years of experience, so once again I reworked my resume. It ended up looking nice though, to the extent that one of my friends said it was a “perfect resume” lol.

I sent the very same resume to all companies. Maybe creating slightly different versions depending on company/job specifics might have been better but I decided not to bother too much. (As an example of what could have been done - I applied to both very early-stage startups and big tech, for the former some full-stack experience is more important to highlight while for the latter some deep tech only)

One more note: I was somewhere between L5 and L6 (like L6 was theoretically possible with a lot of search and preparation, but L5 was much more realistic), but my resume was closer to L5. I think if I were to actually target L6 I should have done a different version of the resume and put ‘Staff’ into the self-proclaimed title. Without it I never got a reply from the staff roles I applied to (well companies have different strategies and your grade sometimes depends on your performance during interviews but usually your grade is in the position definition or pre-assigned by a recruiter)

Overall making the initial version of the table and updating the resume took 1 week of fulltime work.

Applying

Without question, if you have a connection in the company you are applying to - ask them to refer you. In order of priority:

- If you know the person well - ask to look if there are open but unannounced positions (e.g. the majority of good positions in OpenAI/Deepmind/etc are like these).

- If no such positions known or if you don’t know the person well enough - send your top3 positions from the company site + cv. It saves some explaining time for your referrer (was a bit annoying when I was referring people myself to explain that every time)

- If you don’t know anyone at the company - try to look at some referral tables in different communities (e.g. for Russian-speaking there’s one in London Yuppies tg channel)

- (That one you can do theoretically - I almost never did because I don’t like bothering people) Add recruiters of that company on linkedin and send your cv to them with a list of positions you are interested in.

Beware that your potential referrers may

- Not reply for some time (e.g. if you are only connected on linkedin - latest I got was ~1mo later, many people don’t check it often)

- Say yes but forget - so ping them after some time

- Not reply at all (esp if you only barely know them)

It is really important to have a referral if you can - many companies reject candidates applying without a reference w/o looking at all. (Good companies easily get 1000s of low-quality applications per position because of agents monitoring linkedin/etc).

That being said I applied (among others) to Anthropic, Deepmind, Runway, Black Forest Labs, Suno, Sony, Spotify w/o referrer and got interviews anyway (response time varying from 1 week to 3 months [Anthropic]). I might have secured the interviews with a referrer much faster though (and not only that - I suspect on the recruiter screen I’d be more likely to pass for e.g. Runway)

Finally, I’d recommend applying to and having interviews with companies where your match/desire to work there is weaker so that you can get some practice before trying for your dream job. It doesn’t mean you should apply to positions which are completely irrelevant / the ones where you would never work (or continue the process once you realised that) - I’d suggest having a basic decency here.

And if you are hesitating whether you should apply / ask someone for a referral - always do. You lose nothing from trying. In my case I was skeptical if Midjourney would reply to an open-ended email (the only specified way to reach them - they don’t have official positions), yet they did and I got the interview scheduled in just 1 week.

Interviews ontology

Alright, let’s say you are finally done with applying and got some invites to interviews. In the following sections I will go over the interviews by type, but here is a brief list of them:

- Non-technical

    - Recruiter screen. Apparently it is NOT a formality (used to be imo), ~26% (6/23) of started processes failed here for me.

    - Vibe checks (HM / Founder / Senior leader)

    - Behavioral

- Coding

    - Leetcode

    - ML coding

    - AI-assisted coding

- ML

    - ML depth/breadth

    - ML design

- Other (less frequent)

    - Homework

    - Math / ML basics

    - Pre-screen (coding w/o human)

    - System design

    - Data engineering

- Other+ (heard about but didn’t have)

    - Present/discuss a paper (yours or given. I didn’t have it but twice I was briefed that it would appear later in the process)

    - ML debugging (you see the code and tell/fix everything you can notice is wrong with it)

Above are ‘single-topic interview in a vacuum’ kind of types, in practice all of them are mixed during actual interviews in different proportions. In particular the first technical interview (technical screen / phone interview) is usually the worst in terms of predictability & preparation because you can literally be asked anything.

The exact flows and amounts of interviews are different depending on the role/company, the classic FAANG flow of recruiter->technical screen->onsite->bar-raiser was almost never followed in my experience (onsite broken into a few shorter stages of 1-2 interviews)

Some statistics:

My progress by the last stage

Last stage:

- (3) Offer: Perplexity (search for images), Spaitial.ai (diffusion for 3d generation), Unreal Labs (AI for advertising)

- (4) All interviews but no offer: Deepmind (LLMs for science), Recraft, Meta, Spotify

- (1) Didn’t finish in time: Canva (had final interview remaining after onsite but already accepted another offer)

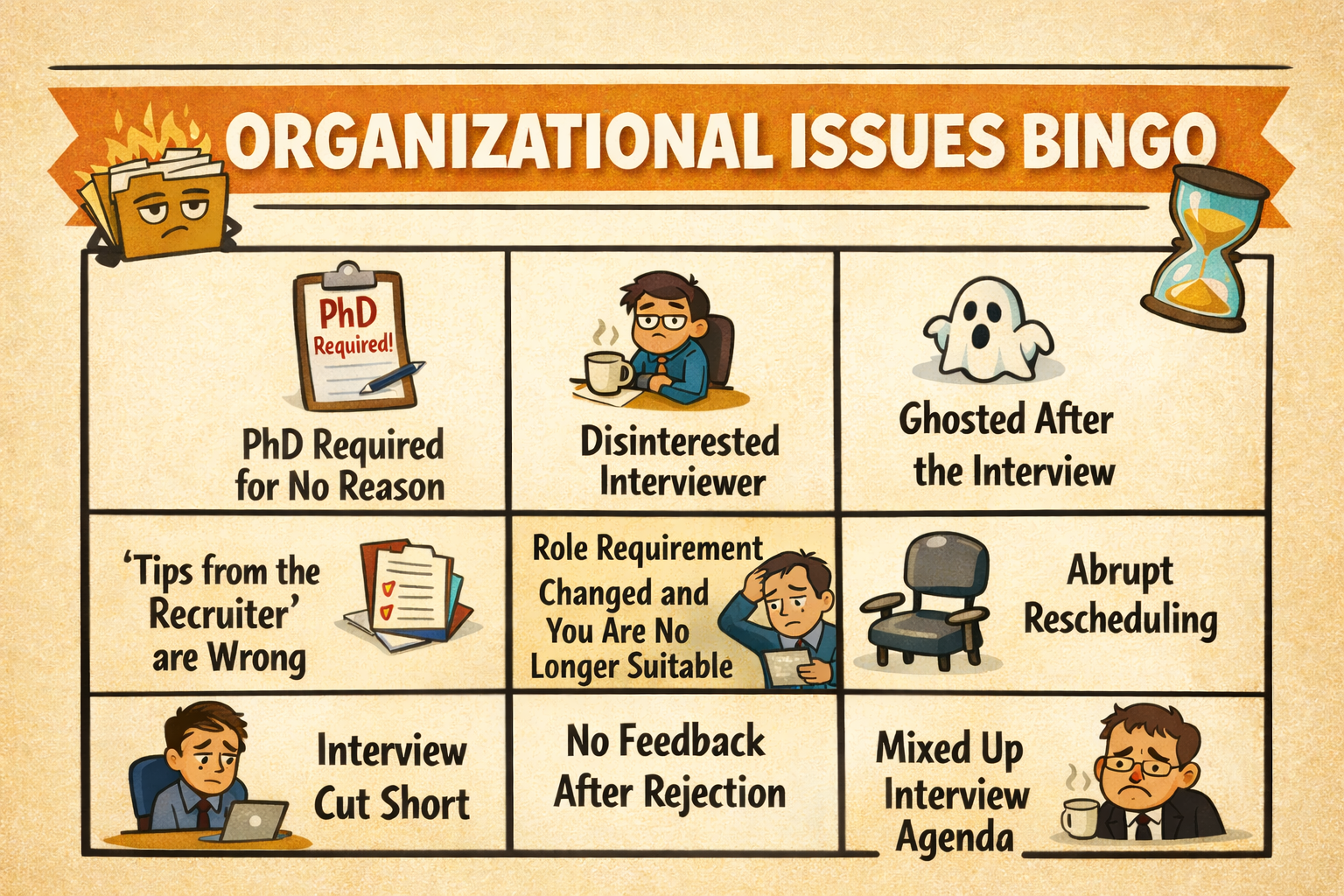

- (2) Position closed/changed in the middle of the interviews: Moonvalley, Sony

- (2) Hired another candidate faster while I was in the middle of the interviews: Synthesia, Lumina

- (3) Technical screen: Amazon, Anthropic (passed the pre-screen but failed on the actual technical screen), Wayve

- (4) Recruiter screen: Midjourney, Runway, Suno, LeonardoAI

- (4) Rejected on my side after/during the first call: Black Forest Labs (no positions with matching interests), Lightricks (no positions with matching interests), GenPeach (product doesn’t resonate with me), Kittl (passed first technical and got homework but figured I didn’t want this position - backend-heavy)

- (12+5) No reply / reject by cv (by default - UK position, with referral): Apple, Microsoft Research, Google (no relevant positions for L5, rejected for L6 by cv 3/3), Nvidia (US/UK), Adobe (US/UK), Tiktok (US/UK), Waymo, Elevenlabs (no ref), JetBrains, Mistral (no ref), TogetherAI, Mozilla (no ref), Luma Labs (US, no ref), Pika (US, no ref), Disney (US, no ref), SSI (US/Israel, no ref), HuggingFace (no ref)

Interviews per company

- (2) Anthropic (pre-screen, screen)

- (1) Amazon (screen)

- (1) Wayve (screen)

- (3) Synthesia (discussion + homework + discussion)

- (2) Moonvalley (ml design + ml coding)

- (2) Sony (vibe check + discussion/vibe check)

- (5) Canva (ai coding + behave + ml design + reverse ml design + paired coding)

- (6) Deepmind (screen/dl basics + coding + ml depth + ml design + 2 discussion/vibe check)

- (4) Recraft (vibe check + ml + coding + experience)

- (5) Meta (screen + 2 leetcode + 1 behaviour + 1 ML design)

- (6) Spotify (screen + 1 behave + 2 ML design + 1 data + 1 system design)

- (6) Perplexity (vibe check + ai coding + ml coding + ml design + reverse sell + vibe check)

- (6) Spaitial (vibe check + homework + 2 screens + 1 vibe check + 1 vibe check)

- total ~50 including

    - Vibe check / HM screen: 10+

    - ML Design: 8

    - Leetcode / coding: 8

    - AI-assisted coding: 2

    - ML Depth/Breadth: 3

    - Behavioural: 3

    - ML Coding: 3

    - Homework: 2 (+1 which I didn’t do)

    - Pre-screen: 1

    - System design: 1

- notes:

    - usually the interviews were more than 1 type so I put the most relevant one as a label. discussion/screen is +- everything, sometimes inclined towards vibe check.

    - recruiter/first calls are usually not mentioned. some companies with a bad match / just 1 interview are also often not mentioned above

    - the interviews per company are listed in the order they happened

    - note that the counts are for the main topic of the interview, e.g. ML Depth / Breadth or Behavioural were in fact very common but as a subsection/part of the interview. simplified ML Design was also often part of the technical screen

Meta-tricks / advice

I didn’t have doubts I could have passed any interview in principle, given sufficient preparation and knowing what to prepare for. Now that last part of ‘knowing what to prepare for’ is tricky - of course you can find smth useful before starting with interviews, but it’s unlikely you’d find exact or close questions for the position and company you are targeting by just browsing. I realised that the best source of such information would be the actual interviews (there are many companies, the questions would somewhat overlap, and even if not - formats would, my mistakes would, etc).

Two specific pieces of advice:

Record the interviews (both technical and not)

How to extract the maximum from any interview for the future? Reflect on them - yes. But human memory isn’t perfectly reliable so I had a bright idea to record the interviews. I think before coming to this idea I read the 95th percentile post by Dan Luu, which can be summarized as “you can get to 95th percentile in +- anything as long as you notice and stop making stupid mistakes”. In particular my idea of recording was inspired by how Michael Malis watched himself code.

Since many of the interviews require you to share a screen I briefly considered a more sophisticated setup but settled on a simple ‘record audio with a phone’, which is very easy and mostly good enough for my purposes (audio alone was likely 90/10 sufficient - all questions & answers & timing/the exact way you replied are there. Recording my face & screen would likely be a bit more beneficial - e.g. I got some feedback that I looked disinterested once, and I guess watching myself code might have given slightly more insight).

I originally recorded only the technical interviews because I thought non-technical ones were just a formality and what to learn there? I was wrong here (got rejected on these a few times) and so started to record them as well. Recording interviews with the recruiter has an extra benefit of saving some process / prep information if it was shared with you (rarely accurate but better than nothing).

I walk daily for some time and it was convenient to replay the interviews (at normal speed so you have the same experience as the interviewer) while walking. Listening helps in a few ways - you understand the questions better (I sometimes caught myself misinterpreting the original questions), you hear how good your replies sound (in my first recorded interview I realized how badly I was speaking - filler words such as ‘like’ made up roughly 1 in 10 words of the entire narrative) and of course you find some factual mistakes. Knowing the exact formulations helps you search / chat with an llm on the topic. Sometimes it becomes clear the interviewer wanted a different thing (e.g. a more thoughtful and long reply on a design question during a technical screen instead of hand-waving).

Ask backwards

There are some textbook questions with known answers (even if you have somehow forgotten them). But many problems of the real world don’t have a known solution and people approach them differently (e.g. how do you do evals on some complex domains, or some subproblems with many possible solution paths - which tends to work best?).

What I discovered is that you can ask the interviewer backwards on these (after you run out of ideas) to share their experience with the problem. You don’t have to wait till the end of the interview to do it, and many interviewers are happy to share something. The reply you get back might actually be novel / helpful to you.

For example, I was asked how to save compute in diffusion models for image/video via making them work on lower resolution for noisier steps (so needed to up/down sample the latent and the question is how). And apparently the approach which used to work the best was to go back to image space with VAE, resize the image, go to latent space again.
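The round trip from that answer (latent -> image space -> resize -> latent) can be sketched as below. This is a toy illustration under loud assumptions: `toy_decode`, `toy_encode` and `toy_resize` are hypothetical stand-ins written in plain Python so the snippet runs on its own, and `FACTOR = 2` is an arbitrary toy compression ratio. In a real pipeline they would be the VAE decoder/encoder of the diffusion model and a proper image interpolation routine working on multi-channel tensors.

```python
from typing import Callable, List

Grid = List[List[float]]  # toy 2D array; real latents/images are multi-channel tensors
FACTOR = 2  # toy spatial compression of the stand-in "VAE" (assumption for the demo)


def resize_latent_via_image_space(
    latent: Grid,
    decode: Callable[[Grid], Grid],
    encode: Callable[[Grid], Grid],
    resize_image: Callable[[Grid, float], Grid],
    scale: float,
) -> Grid:
    """Decode the latent to image space, resize there, then encode back."""
    image = decode(latent)
    resized = resize_image(image, scale)
    return encode(resized)


# --- Hypothetical stand-ins so the sketch runs without a real VAE ---
def toy_decode(latent: Grid) -> Grid:
    # Nearest-neighbour upsampling by FACTOR (a real decoder is a network).
    return [[v for v in row for _ in range(FACTOR)] for row in latent for _ in range(FACTOR)]


def toy_encode(image: Grid) -> Grid:
    # Average pooling by FACTOR (a real encoder is a network).
    h, w = len(image) // FACTOR, len(image[0]) // FACTOR
    return [
        [
            sum(image[i * FACTOR + di][j * FACTOR + dj]
                for di in range(FACTOR) for dj in range(FACTOR)) / FACTOR ** 2
            for j in range(w)
        ]
        for i in range(h)
    ]


def toy_resize(image: Grid, scale: float) -> Grid:
    # Nearest-neighbour resize; a real pipeline would use bilinear/bicubic.
    h, w = len(image), len(image[0])
    new_h, new_w = int(h * scale), int(w * scale)
    return [
        [image[min(int(i / scale), h - 1)][min(int(j / scale), w - 1)] for j in range(new_w)]
        for i in range(new_h)
    ]


latent = [[1.0] * 4 for _ in range(4)]  # 4x4 toy latent
smaller = resize_latent_via_image_space(latent, toy_decode, toy_encode, toy_resize, scale=0.5)
bigger = resize_latent_via_image_space(latent, toy_decode, toy_encode, toy_resize, scale=2.0)
print(len(smaller), len(smaller[0]))  # spatial size of the downsampled latent
print(len(bigger), len(bigger[0]))    # spatial size of the upsampled latent
```

The trade-off is an extra decode/encode pass every time the resolution changes, which is presumably why the question of resizing directly in latent space comes up in the first place.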

So I recommend doing that when the situation calls for it.

Non-technical interviews

Previously I treated all non-technical conversations as a formality and didn’t pay much attention. This time, however, I learned that it is actually an important step as well. Unless you are clearly a very good match for a position (though I have an exception even here), the initial talking carries even more weight.

In numbers, out of 23 started processes 6 (26%) failed because of my not-caring-much attitude towards that part (I guess numbers might have been worse if I hadn’t noticed it somewhere in the middle). The companies where I failed like this often were more interesting than the others as well.

The usual interviews here are

- Recruiter screen

- Hiring Manager screen/interview (usually first or last)

- Founder/Senior Leader vibe check

In the ones above you are mostly exchanging introductions + asking more questions about each other (both you and the employer are trying to figure out if you fit the position or not). Also, multiple positions beyond the one you originally applied to might be open in a company, and a recruiter can notice if you are a good fit for them (you can also ask directly - likely the best option). Recruiters in bigtech verify/pre-assign your level as well (if the position you are interviewing for is not a fixed level; sometimes the level is determined through the interviews, though interviewers might be biased by the initial estimate anyway).

The less frequent ones

- Behavioral (as a separate interview or as an extra question on all or some interviews)

- Reverse vibe check (you ask questions about a team/company)

- Experience interview (had such an interview at Recraft - you basically retell your entire career history in 1h - all projects you ever worked on, what exactly were you responsible for, interesting technical moments, etc in as much detail as you can while the interviewer would ask more questions on what they are interested in)

Also the vibe check is continuous and every interview contributes to some extent, including the technical ones.

Prep

Basically you need to (1) remember all/any relevant experience and (2) be able to present it properly. Also avoid style/mood mistakes (more on that later).

Intro

After a few initial interviews I felt like my introductions weren’t conveying what I wanted, so I spent a couple of hours distilling a 2-min intro which I used from that moment on. I remember reading this article on the topic, though the main point I took from it was this: since you’d have many interviews ahead, it’s ok to spend a few hours on an intro which you’d basically have to say in every single one of them. My structure wasn’t like the one in the article but I was satisfied with the end result. It contained the answers to what I did before, why I stopped my previous work, what I was looking for and why I was a good candidate for them.

My intro for ref (as found in the notes)

I have around 8 years of experience working in Deep Learning and its applications.

I started from ABBYY, it’s a document processing company where I had a 50/50 split between academic research and production-oriented prototyping. It was mostly Computer Vision with some blend of NLP - detection, segmentation, OCR, early language models.

At Snapchat I worked with filters. The user would open the camera on their phone and now they would look like a cartoon character or a younger version of themselves or whatever we want. And it would work in real-time on the user’s device. When I joined Snap it took over 6 months for engineers to develop 1 such effect and when I left I led the team responsible for this direction. We completely automated this process and the user would only need to send a text prompt or image reference to get the desired effect. In terms of technology it was GANs, Diffusion, LLMs and also working with data a lot.

Eventually I realised that while I really liked the technology and had a very strong team, I was not passionate about the product of Snapchat. So I decided to leave and build my own company in the domain of Generative Video.

I had a number of projects during the last year. For example, to enable long video generation you need the character to always look the same on all shots. So I trained a custom adapter which would accept one reference image of a character and generate the same character in a different context. I also built end-to-end systems, for example, for music video generation - user would upload an audio file and the system would analyse it, make a storyboard, create keyframes, animate them with image2video. I had a number of such projects but ultimately didn’t figure out how to build something big and now I’m looking for something with more impact.

Note that many interviews are short or do not expect you to do a detailed intro so I often matched the interviewer’s introduction in length where it was appropriate (most coding-only interviews for example would be just 1-2 sentences). On the technical sections the interviewers might not even ask or forget to ask for an introduction (in which case I usually asked if they want me to introduce myself as well and gave a similar short intro).

Remember what you did before

Anecdote I (stress 15min interview with famous researcher). It was the initial interview. There were no introductions. The interviewer was a few minutes late and in a somewhat annoyed tone started with ‘ok tell me of the most complex technical thing you did’, and when I loosely explained it on a high level he pressed ‘I want to know everything, architecture/losses/data/etc to the extent it can be reproduced’. Setting aside the fact that you often can’t tell quite everything because of NDAs, the truth was that I didn’t actually remember the full details of the project (not well enough for a clear explanation on the spot at least) because for the last year my projects were somewhat smaller in scale and of somewhat different focus. I did manage to explain it satisfactorily and overall passed but you get the point.

After this I went back and refreshed my memory of my last 3 years in more detail.

Anecdote II (technical interview with Sony). The interview was structured as a discussion on a specific topic (efficient architectures) with two engineers. At some point one of the interviewers asked me about my papers. Not just which ones I had or what they were about / the main results. No, she had read the papers before the interview and asked specific questions. The thing is, one of the papers I only superficially participated in and another was written in 2019, so naturally I only roughly remembered what was in either. So we had a funny and humbling moment of the interviewer saying “Ok, let me remind you what was in your paper”.

I don’t remember learning from this lesson and actually reading my papers after that :) It wasn’t ever asked on any other interviews later but I probably should have done it. Anyway, you know such things can happen now so be prepared.

Questions to interviewers

It might be a good idea to have some kind of checklist for end-of-interview questions (I didn’t have it or maybe only implicitly).

The ones best applicable are situational from the particular conversation you had but I used these heavily:

- “What is one thing you like the most about [company/team/your role] and one thing that you don’t like the most?” - my default question, usually very telling (even if people evade it, that’s a signal in itself). Also different interviewers in the same company would have different answers (sometimes they would align very well though, e.g. everyone I asked in Spotify said ‘team/people they work with are amazing’)

- “How much compute/GPUs do you have for training?”

- “Do you have any restrictions on using AI tools in your work (e.g. claude/cursor/etc)?” - nearly everyone I asked said they use everything w/o restrictions as company policy. But you never know

- “Why do you believe [company name] can work? What are your moats?” - for founder/HM/recruiter in startups

- “What is your strategy & product?” - for founders. You’d be surprised how many can’t answer that question convincingly

- “What are the biggest challenges now and what do you expect to happen in the company in the next 6 months?”

Asked a few times but didn’t get much:

- “What’s one thing you recently learned at work (say, in 1 month)?”

- “Regarding our previous discussion - can you provide some feedback - what is one thing which stood out the most / what you would do differently from what I said?” - that one only after a technical interview where you think you did well and don’t know where you might have missed smth. I got some replies but mostly opinionated, didn’t ever feel any revelation out of it. I thought it’s a ‘genius idea’ to ask for feedback directly at the end of the interview since recruiters nearly never provide feedback (or even if they do it’s very generic). But in reality you can usually tell yourself where and how you did suboptimally (esp if you recorded the interview and chatted with AI afterwards) and the interviewer can’t provide their completely honest and detailed replies anyway

Specific mistakes

Location

Most interesting ML positions are usually in the US. The common knowledge though is that there’s not much point applying to them if you don’t have a Green Card (most employers will just discard you at that point) or at least a work visa (some employers may consider you). I didn’t have a US work visa but sometimes applied if there were no other positions in that company. Not once did I pass the resume check stage with these.

Location (Suno AI). Suno had most of the positions in the US as well but I noticed a position in Cambridge. I thought that Suno is interesting enough to maybe consider moving to Cambridge (also Cambridge is nice). So I applied and after some time was on a call with a recruiter, where it turned out that the location was actually Cambridge, Massachusetts, not Cambridge, UK. I said that I could theoretically get an O1 as it’s similar to the UK Global Talent visa but, as expected, I got a rejection letter a couple of weeks later.

Experience (relevance)

The recruiter / team lead screens would normally check you for relevant experience with varying degrees of depth. And often enough though not always (seems to depend on a company / interviewer much more than on you) I was ruled out because of not doing smth similar enough.

My most obvious lack of experience was in large scale training (did smth like 4x8 gpus but not more) and I was careful not to bring it up unless pressed. Unfortunately some recruiters deliberately check these kinds of things: R: “What was the largest model you trained in billions of params?” A: “A few billion?” R: “No but that’s for finetuning you said, what about training from scratch?” A: “Hundreds of millions?”

While it does feel somewhat unfair (do you think I won’t be able to, really? what’s the difficulty? I did distributed training anyway and you have an infra team of sorts who set up things already) that’s the reality.

Some things would reveal themselves later even if you got past the initial checks. At Deepmind (LLMs for Science) I felt I did pretty well in the interviews overall (went through all stages). But eventually, while the lack of experience in some relevant things was not a hard filter, it seemed to be a soft one (would you rather pick someone who has done smth like what your team does before or someone relatively new to it?). Multiple candidates are often interviewed in parallel, so while you may pass all of the interviews you may still lose in comparison with other candidates who also pass them.

Perplexity (counterexample). For a search team on the initial interview with TL I directly said smth like “Just to be clear, I don’t have much experience with search” and got “That’s quite alright, rarely we know everything” - and I got the offer eventually.

Experience (Laziness / Underselling)

The rough point is that if you want a position you shouldn’t downplay your knowledge or ‘be lazy’ in replies. If you’re asked smth try to answer even if you have superficial knowledge or it’s a recruiter question.

Laziness (Midjourney). During the first (and, sadly in my case, also last) call with Midjourney I was asked among other things ‘what is your opinion about text diffusion?’ to which I lazily replied that the topic is interesting but I’d like to read more first and didn’t really have an opinion right now. I even got pushed again with a variation of that question immediately after but again kind of avoided it. I could have answered with some obvious things, it’s not that I didn’t know anything on the topic. I guess the reason it happened was that I just didn’t try hard there and gave the most natural reply instead of the most useful one. (Note: there might be other reasons why the process didn’t move forward in my case of course but that was the most obvious ‘flaw’ in that conversation).

Underselling (Synthesia). I was considered for 2 positions initially: smth about inference optimisation & smth about quality improvement in avatars in both audio and video. The latter one was definitely more interesting (and more suitable as well) but I learned I was filtered out of this one early. I think the main reason why it happened was me underselling or outright downplaying my experience. For example I was asked about my experience in multimodality, to which I mentioned a paper from 4 years ago, saying that it was a very basic thing. I could have mentioned more relevant things but again, I was not thinking much about it - which didn’t play favorably in my case.

I guess the point of this section is that while you can give the most natural and easy reply w/o thinking - the questions, even from early interviews, are asked for a reason. As a candidate your goal is to sell yourself, which I don’t like the sound of and wouldn’t go to the extremes even knowing this explicitly now, but paying attention and giving more relevant replies still makes sense.

Demonstrated interest / motivation / skepticism

I had 2 processes where I was rejected not because of technical (or even soft) skills but because of ‘lack of motivation’.

You shall not bypass (Leonardo AI). I had a call with a recruiter. He told me there were many open positions that I could fit (from my perspective I was nearly an ideal candidate and should have easily passed). But the tone of the discussion wasn’t good - the recruiter was visibly tired (which I even asked him about) and I was at the earlier stages of my overall interviewing, so I was giving fast and lazy replies to ‘bypass this useless recruiter step’. And I guess it was somehow not acceptable (the recruiter didn’t even reply a week later when I asked about the status). While that might be an outlier, looking ‘visibly interested’ - asking questions about the company and such - likely increases your chances of passing recruiters.

Looking disinterested (Synthesia). I had a final interview scheduled but got a message that they accepted another candidate. Synthesia is the company with the most humane rejections - you get detailed feedback and may schedule a call with a recruiter to learn more. So during that call I learned I was not accepted because of ‘lack of motivation’. The recruiter probably made up the answer when I asked what it means specifically. In his words - I didn’t look passionate enough during the interviews in the way I was talking. I don’t know but it might be that scheduling interviews 1-2 weeks out every time was also perceived as ‘lack of motivation’ (or even if it wasn’t - you give other candidates the ability to finish the process earlier - which happened in my case).

On a neutral side I learned that ‘submitting homework on the last day’ is not a sign of bad motivation (at least I got an offer with one and it was explicitly denied that it made any impact on the decision in the other case). I guess it may still play favorably to you if you do things early but it doesn’t look like submitting close to deadline is considered a bad sign.

Too direct questions (Runway & others). Sometimes I just feel like asking something even if it’s a bit of a difficult or silly question. Examples would be:

- “Why your models are always a step behind state-of-the-art and what is your strategy here?” (To Runway recruiter)

- “All people I know from [company name] left it and usually not for a good reason - how would you comment on the situation in the company now?”

I feel like these are fair questions but they might signal your skepticism about the company so if you really want to get there maybe a smarter strategy would be to ask them in the final interviews (or not at all of course). At the same time if you don’t get to the end it might be your only chance to get an answer (though in my experience you wouldn’t get an insightful one to questions like that). I don’t have direct confirmation that this affected the discussions negatively but I guess it easily could have.

Mismatches

Sometimes I got an interview with a recruiter or HM but the expectations wouldn’t match.

The simple case is that you didn’t like the position / company. Happens with startups sometimes since you are often in the dark about what the company is doing or even the company name.

The more annoying case is when you like some position which you know is open but the company/recruiter only considers you for a position you don’t want to go to. I honestly don’t know what to do here / if it is fixable at all. Maybe that’s the case when the only thing which could move you further is good connections (random speculation).

Examples (no observations or ideas from me here just to show how it happens):

Lightricks (LTX Studio)

They had 2 independent parts of the business - the face filters app which is the moneymaker and the LTX studio (they do research / make their own video generation model) which is cool but more of an experiment / charity to them. I got an interview with the HM of the team in face filters (I didn’t apply there) w/o being told the position upfront - on the interview itself I said I wasn’t interested in doing the same thing as before and was looking for other things, with video generation. The conversation was overall going well from my perspective in terms of the atmosphere. I think the HM said she’d pass my resume to that team but I got no reply from them in the end.

Black Forest Labs

I had an interview with a recruiter where among other things he asked “what are you passionate about [in context of positions we have]?” and I said smth about “data” (meaning preparing data for training). I am actually passionate about training even more but I hadn’t seen any positions where they’d consider me for that, while there was a position for smth like working with data for training. A bit after the interview I got invited to the next one, the position was ‘ML Engineer (Moderation)’ which was… data but not the kind I was interested in. I got back to the recruiter with - “hey but what about this data position?” -> “no we’re already late in a process with another candidate for this one” -> “ok what about this one” -> “no that’s early career only”. So this is how it ended.

Behavioral interview

Answer a set of questions in the STAR format each.

Example questions:

- Describe a time you had to prioritize between multiple competing urgent demands. How did you decide and what was the outcome?

- What is the biggest achievement in your previous projects?

- Tell me about a time when you had a conflict with a colleague

- Tell me about a time you mentored or helped grow someone on your team

Questions from actual interviews

- Tell me about a time when you had a significant unexpected obstacle in achieving a goal

- Give an example of when you had to solve a situation autonomously without guidelines

- Tell me about a time when you not only met a goal but you considerably exceeded expectations

- Tell me about a time when you welcomed the change of existing work practices (coming not from you)

- Describe a professional situation where you benefited from someone challenging your idea directly. How did you respond, and what did you learn?

- Tell me about a complex initiative you led to delivery. What was the problem and the biggest challenges you were trying to overcome to make it successful?

- In this position, you may need to handle multiple projects with tight deadlines. Describe a time you managed conflicting priorities. What approach did you take to make sure everything was completed on time?

- Tell me about a time where you haven’t completed a project because the world around was constantly changing?

- Tell me about a time where you didn’t achieve the goals you set to do and what did you do about this situation?

- Tell me about the work or any achievement you are most proud of

- What is a project or task you completed, that everyone around you thought was impossible?

- Describe the way you collaborate with non-technical stakeholders

- Tell me about a time when you had to disagree with your product manager or designer and how did you resolve this

- Tell me about a time when you had to make sure stakeholders across the business understood a critical technical decision or investment

- Describe a time when you had to foster a harmonious and collaborative atmosphere among a team of people with different interests and priorities. How did you bring people together to achieve a common goal? What was the result?

- How do you decide how much time is needed to do a specific feature/improvement/request?

- Tell me about the most difficult working relationship you had

- Tell me about a time where you worked in a group where some team members were difficult to get along with

- Tell me about a time when you had to resolve a high pressure and high conflict scenario

- Tell me about the time when you had a difficult conversation and needed to provide feedback to a colleague. How did you approach it and what considerations you have given to their personal feelings? Why did you choose this approach? How did they react?

- How do you make sure you are not a low performer? (lol)

- Share an example of feedback provided to you (about your performance) and how did you work on it

- Give an example of valuable constructive feedback given to you and how did it change your behaviour. How did you receive the feedback?

- What key goals (in work) did you set for yourself during the last year? Do you measure performance / how successful you were in achieving these?

- What do you see as an advantage of being part of diverse teams? How have you actively leveraged the differences within your teams?

I had 3 such interviews as a separate step + some questions coming up in other interviews (mostly on technical screening, rarely on recruiter as well). I passed them ok (either moved to next stage or got neutral/positive feedback on that part).

On the interview itself I might have asked in the beginning ‘how many questions / how long should I reply for each’ but usually it’s a conversation and the interviewer might want to talk more about something depending on your answers or move forward with new questions. Telling a complete story in the right format in a not unreasonable amount of time (likely <5 mins) should be good enough.

As a minor piece of advice - sometimes you would not have an example for the exact formulation but might have a close enough one (e.g. I was asked how I disagreed with the product manager or designer, to which I said such cases were rare and trivial but I guess I can share how I disagreed with my director? Which was good enough)

prep

My preparation here came from 2 somewhat accidentally discovered / somewhat obvious facts:

- Your stories should be matching the level you are interviewing in scope (inspired by some earlier version of this article)

- Middle - anything beyond just you

- Senior - at least your entire team

- Staff+ - at least multiple teams/entire org

- You are usually asked to demonstrate a certain quality (leadership/initiative, resilience, teamwork, conflict resolution, etc) - from a random but good youtube video (first 10mins are likely sufficient)

- so you are effectively asked to demonstrate some of these but in a roundabout way (unlikely you’d be asked ‘tell me how you showed leadership’ but you may be asked smth like ‘how you resolved conflict in a team’ or ‘how you pushed a project to completion despite obstacles’ etc)

- many actual questions would be asking for more than 1 quality at a time (“Tell me about a time you had to deliver a project on time despite a team member not pulling their weight or a major unexpected blocker” — leadership + resilience + conflict resolution + teamwork in one story)

So a combined preparation approach was

- List every experience/project/etc relevant in the last 3 years (my self-prompt was to look for: reusable model/technology improvement, shared infra improvement, process improvement, launches, patent/paper, collaboration, mentoring + any conflicts)

- Pick the right seniority level by impact (filter out lower level ones)

- Self-prompt for demonstrated qualities through these experiences (where’s leadership here? teamwork? etc)

- At the end for each quality/question type I’d have ~1-3 strong examples. So with just a few stories it was sufficient to cover the vast majority of the questions.

Once the structure and the way to get answers was clear I didn’t spend much time practicing (a few hours total maybe?). Practice was just looking at a random behavioural question, mentally sorting it out to demonstrated properties, telling my story with the respective properties highlighted in smth close to the STAR format

forms

Some companies ask you open-ended questions similar to behavioural ones in a form (either when you first apply to a position or they may send the form later instead of the actual behavioral interview). In particular, Anthropic, SSI, Mistral, Recraft.

Questions are mostly published and openly available and you have infinite time to reply. I put them here as both demonstration and something potentially useful to think about when you are choosing your next role.

Example questions outside of the usual STAR story format

- Imagine you have an urgent task, and you need to coordinate with multiple team members quickly. Describe your ideal approach to getting this done efficiently.

- What motivates you to take on a new challenge at work, and how do you see your career evolving over the next few years?

- “Why Anthropic? [Why do you want to work at Anthropic?] (We value this response highly - great answers are often 200-400 words.)”

- In one paragraph, provide an example of something meaningful that you have done in line with your values. Examples could include past work, volunteering, civic engagement, community organizing, donations, family support, etc.

- What’s your ideal breakdown of your time in a working week, in terms of hours or % per week spent on meetings, coding, reading papers, etc.?

- Please provide the link to a single commit that you find impressive, and explain why you find it noteworthy. You don’t have to be the author.

- What is a project or task you completed, that everyone around you thought was impossible?

- What do you optimize for in life?

- How intensely do you like working?

If a question is on the position application form it’s a good idea to save your reply so you can copy-paste if you’d like to apply to other positions of that company (e.g. Anthropic’s questions are largely the same).

Technical interviews

Coding

The exact place where you’d do the coding varies greatly: could be in your own ide whatever you prefer (usually asked to have ai hints disabled unless it’s an ai-assisted coding interview), in the Coderpad/Hackerrank/etc, in the colab notebooks, google docs and so on - be prepared for anything, hard to guess. You would often be asked to share your entire screen.

Leetcode

The basic algorithms & data structures problems (arrays/strings/trees/graphs/etc).

Meta for some reason really likes these interviews (so you have an initial technical screen + 2 of the loop interviews only for leetcode). But in most cases it would be a subsection within an interview (usually during 1st technical screen - can be 20-30-40 mins depending on the company)

Taking Meta as a standard the usual leetcode-only interview is 45min (2min intro, 2 problems to solve in around 15min/each ideally, 5 min questions).

preparation

If you are not too bad of a programmer you sure will solve the usual leetcode questions eventually (given sufficient time). The thing is to perform well you have to do it fast (<15 min per problem including all the right talking, thinking, sometimes - debugging, etc).

During my prep I mostly relied on a few articles & Grind75 list from tech-interview-handbook, so will just refer to the most important ones with extra comments sometimes.

There are 4 main things you need:

- be familiar with the most common problems being asked. Here I recommend checking how to make study plan and go over Grind75.

- I just went over Grind75 sequentially while saving problems I had any trouble with for later repetition (a convenient way to do so was to star a problem in leetcode which allows you to later jump at them in random order).

- After I was done with that I was solving random medium problems (excluding dynamic programming because it’s very repetitive and not actually asked in most cases).

- When you got your question solved fast enough the interviewer would usually pressure you to improve the solution (e.g. do smth in-place / non-recursively / etc or they’d upgrade a problem to a harder version). Or they would ask some questions at least, the laziest version of which is ‘what tests would you write for this function’. I don’t think there should be a separate practice for that but keep it in mind.

- As a minor trick - some problems in the lists are for paid leetcode users only, but you can just ask chatgpt/etc to give you the definition - works like a charm. (you can also ask chatgpt to review the code you produced afterwards, which is especially useful for the real interviews if you copied what you produced during that time)

- be familiar with your programming language of choice. In particular - some things you would likely never need outside of the interviews (like removing items from a list, really?). Also as part of that remember the most common data structures/algorithms & how they are implemented in your language of choice including complexities

- for python this is a small cheatsheet I used

- most likely you will figure out what you don’t know naturally by going over the Grind75/etc problems - so I highly recommend not to be lazy and go over the boring-looking problems which you think you know how to solve as well (see my example failures on that front)

- communicate as expected. Useful articles: how candidates are evaluated after the interview, the expected communication

- some suggestions from my experience

- check the conditions first (before you even hear the question) if the interviewer didn’t mention that (‘will we run the code or only with our eyes?’ ‘can I use the internet or something?’ first - really depends, second - usually no but sometimes yes. note: even if you’re allowed to use the internet you rarely actually should - ideally all should be covered by your preparation already)

- it is really, really, really important to (1)understand the question by asking clarifying questions or examples, checking some corner cases, etc (2)figure out your solution (ideally thinking out loud) before any coding (one example: I once said smth like ‘ok let me write this function’ meaning I just want to define the signature but it already rubbed the interviewer the wrong way and he was like ‘no let’s figure out what we need to do first’)

- check your solution & fix bugs before running/declaring you finished + explain complexity


- practice

- I suggest reading how to solve problems once you mostly figured out the previous sections. and follow-up with a few mocks or self-mocks or interviewing at companies you don’t like (I only used self-mocks for maybe 5-10 times on random medium problems)

What wasn’t helpful:

- I originally joined my friends in solving ‘problems of the day’ on leetcode but they are mostly irrelevant, repetitive and generally just not smth that helps you in any way - so strongly recommend against

- (opinion but not a strong one) Meta recommends Blind75/Neetcode150 which are basically outdated versions of Grind75. Grind75 seems to be correlated more strongly with what you are likely to be asked

observations / specific examples

Overall I had 8 interviews with leetcode elements (3 at Meta + 1 each at Anthropic/Recraft/Spotify/Amazon/random).

Some of the questions I was asked in no particular order

- traditional leetcode

- Check if there’s a loop in a graph

- Deepcopy of a graph (with potential cycles)

- Find Least Common Ancestor in a tree

- Correct parenthesis sequence while removing minimum number of characters

- Check if string can be composed of words from vocabulary

- more applied / ml-related but still approached like leetcode mostly

- Rotate image on arbitrary angle

- Convert call stack trace from one format to another (sampling profiler)

- Implement sequence packing for llm training (list[list[int]] where individual lists are of variable length -> list[list[int]] where each list is of size max_length combining smaller sequences. ‘as optimal as you can’)

- Sometimes there is a warm up, e.g. “check if int number is full power of 7 (i.e. 7^n)”. Or for ML coding can be smth like “implement softmax”. Which you probably should do in 2-5 mins at most unlike the usual 15-20.
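To make the sequence packing question above more concrete, here is a minimal sketch of one way to approach it: greedy first-fit-decreasing bin packing. This is not necessarily what the interviewer expected. The function name, the pad_id argument and the choice to truncate over-long sequences are my own assumptions for illustration.

```python
def pack_sequences(sequences, max_length, pad_id=0):
    """Pack variable-length token lists into rows of exactly max_length.

    Greedy first-fit-decreasing: longest sequences first, each one goes
    into the first row that still has room for it.
    """
    # Assumption: a sequence longer than max_length is truncated, not split.
    items = sorted((seq[:max_length] for seq in sequences), key=len, reverse=True)

    rows = []  # each row holds concatenated token ids, len(row) <= max_length
    for seq in items:
        if not seq:
            continue  # nothing to pack
        for row in rows:
            if len(row) + len(seq) <= max_length:
                row.extend(seq)
                break
        else:
            # no existing row had room, so open a new one
            rows.append(list(seq))

    # pad every row up to max_length
    return [row + [pad_id] * (max_length - len(row)) for row in rows]


packed = pack_sequences([[1, 2, 3], [4, 5], [6], [7, 8, 9, 10]], max_length=5)
print(packed)  # [[7, 8, 9, 10, 6], [1, 2, 3, 4, 5]]
```

The inner loop makes this quadratic in the worst case, which is usually fine to say out loud and then discuss how to speed up. A real training pipeline would also need to track sequence boundaries (for attention masks / position ids), which is left out here.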

Now a few notes and observations:

- ‘rotate an image’ was smth I actually had to draw to solve it - so having a piece of paper next to you is a good idea

- for ‘LCA in a tree’ I initially suggested a more general solution I remembered which didn’t utilise that the tree had parent links everywhere. I adjusted for a more optimal solution but the point here is to be sure to notice specifics of a problem and don’t overrely on the algorithms you know

- ‘deepcopy of a graph’ is an example problem which I had seen on leetcode before the actual interview but was annoyed because “why should I solve it if there’s copy.deepcopy”. And so I didn’t practice the problem. And during the interview I realised that I don’t remember how to get id of arbitrary python object which was sort of required to make a solution of a reasonable complexity. I ended up convincing the interviewer that “there should be a way to X but I forgot how to do it in python” and implemented the brute force and passed the interview in the end. But that was close

- Sometimes you may impress your interviewer by picking a harder solution. I was pre-assigned a higher grade (Staff+ though I applied to Senior position) due to my other answers (non-leetcode) + ‘Wow I have never seen anyone solving the problem like this - people just go with the most basic solution’ (c) Interviewer. (I solved ‘loop check in graph’ with non-recursive dfs.) Funny thing, after the interview I realised my solution wasn’t entirely correct but tests passed and interviewer was happy. Not sure it’s worth it but you can try.
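For the two graph problems mentioned in these notes, here is a rough sketch of how they could look in python. It is not the code from the interviews: the Node class, the adjacency-dict input format and the function names are assumptions made for illustration. The first function uses the built-in id() to map each original node to its copy; the second is a non-recursive three-colour DFS for a directed graph.

```python
class Node:
    """Hypothetical graph node: a value plus a list of neighbour nodes."""
    def __init__(self, val):
        self.val = val
        self.neighbors = []


def clone_graph(start):
    """Deep-copy everything reachable from `start`, cycles included."""
    if start is None:
        return None
    # id(original) -> copy; id() is unique while the object is alive
    copies = {id(start): Node(start.val)}
    stack = [start]
    while stack:
        node = stack.pop()
        for nb in node.neighbors:
            if id(nb) not in copies:
                copies[id(nb)] = Node(nb.val)
                stack.append(nb)  # visit each original node exactly once
            copies[id(node)].neighbors.append(copies[id(nb)])
    return copies[id(start)]


def has_cycle(graph):
    """Directed graph as {node: [successors]}; iterative DFS, no recursion."""
    WHITE, GREY, BLACK = 0, 1, 2  # unvisited / on current path / finished
    colour = {}
    for root in graph:
        if colour.get(root, WHITE) != WHITE:
            continue
        colour[root] = GREY
        stack = [(root, iter(graph.get(root, ())))]
        while stack:
            node, successors = stack[-1]
            for nxt in successors:
                state = colour.get(nxt, WHITE)
                if state == GREY:
                    return True  # back edge to a node on the current path
                if state == WHITE:
                    colour[nxt] = GREY
                    stack.append((nxt, iter(graph.get(nxt, ()))))
                    break  # go deeper, resume this iterator later
            else:
                colour[node] = BLACK  # all successors explored
                stack.pop()
    return False
```

Keeping the successor iterator on the stack is what lets the DFS resume a node where it left off. That is the part which is easy to get subtly wrong when converting from the recursive version.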

I failed 3 (out of 8) leetcode-like interviews:

- Amazon (underprepared). It was the very first technical interview across all companies so I was underprepared in general not just in leetcode.

- Recraft (underprepared for a high bar). They had somewhat harder-than-average questions + standards for passing. So my performance was objectively below average in the end. I think if I’d spent like 2x time preparing for leetcode (~80h instead of ~40) I would have passed easily.

- Anthropic (being tired). It was the very last of my interviews across all companies and I was (in theory) most prepared. Anthropic, sadly, replied very late so I already signed another offer and even started working in a new place. But I was curious, partially because of this blogpost outlining their approach. Unlike in the blogpost I found the pre-screen pretty easy and had plenty of time left (I had around 40mins for the last stage and just calmly finished 20mins before the deadline). So I passed the pre-screen and the follow-up recruiter call but on the technical screen with the engineer I struggled badly and barely produced a solution that I should have normally been able to do pretty easily (well, by my own guess). The thing is that week I worked like 80h and in particular on the day of the interview could be easily 10-12h before it. In contrast for the pre-screen I started in the morning on a weekend after a good rest and sleep. This was a really long way to say that your shape matters for the interview (most of the time I set important interviews around 10-11am and easier ones in the afternoon and later just studied & prepared for the next ones).

ML Coding

This is just the usual ML-related coding of some specific layers/losses, training loops, pre-post processing, etc. Some example questions below.

Implement layer/loss/function:

- (warm up) Implement softmax

- Implement contrastive loss fn

- Implement multi-head attention in pytorch

- Implement 2d positional embedding for attn

- Write a function to …. What if we want to use it as loss? (How to make it differentiable)

- (used to be asked) Write convolution / pooling layer

Pre/post processing, inference:

- Implement top-p for llm inference

- Implement BPE
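As an illustration of the level of detail expected, here is a minimal sketch of the softmax warm-up plus top-p (nucleus) sampling in plain python, kept dependency-free on purpose. In an interview you would most likely write this with tensors. The function names and the dict return format are assumptions for illustration.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(logits, p):
    """Keep the smallest set of most-likely tokens whose total mass is >= p.

    Returns {token_id: renormalised probability}.
    """
    probs = softmax(logits)
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, mass = [], 0.0
    for i in order:
        kept.append(i)
        mass += probs[i]
        if mass >= p:
            break
    return {i: probs[i] / mass for i in kept}


def sample_top_p(logits, p, rng=random):
    """Sample one token id from the truncated, renormalised distribution."""
    dist = top_p_filter(logits, p)
    tokens = list(dist)
    weights = [dist[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]
```

Typical follow-ups are how this combines with temperature (divide the logits before the softmax) and how to do it for a whole batch without python loops.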

preparation

Maybe because it’s what I actually used to do a lot at work, I didn’t really prepare specifically for coding tasks like this.

Yet I do recommend to go over the examples above and just implement them w/o reference.

In my case on the actual interviews I realised that I didn’t remember / didn’t know (because didn’t really need to use) some syntax (e.g. that @ actually can do batch matmul and there’s no need to call separate .bmm or whatever. or that there is smth like torch.masked_fill). einsums might be helpful as well. Mostly - similar advice to leetcode on knowing your language but now - knowing your main frameworks.

Practicing the examples would be probably sufficient. I did that to some extent (on what I was sure I didn’t know well - e.g. 3d RoPE for video generation models) but turns out it makes sense to do a broader set of things because you can discover where you are lacking.

observations

I had just 3 interviews with such questions (all passed) - in Perplexity/Moonvalley/Spaitial.ai. The questions were usually set for 30mins

Some observations

- the leetcode preparation traumatizes you - instead of multiplying matrices the first time I got a question from this section I almost started with for loops

- you should actually solve the problem instead of doing smth which ‘looks right’. I had a painful but important realisation during the ‘implement contrastive loss’ question. During this question it was allowed to use the internet to find the right paper/loss/etc (but not allowed to use llms). So I used SigLip2 as reference, opening the paper and copy-pasting the loss w/o much thinking, and pretended that it works. Without real understanding. After the interview I realised I was fundamentally wrong (what I implemented was bullshit that only looked right at first glance). The ‘funny’ thing is that I knew what a contrastive loss was and the formulas w/o any lookup - if I had spent like 30 seconds to remember and think I would have implemented it correctly and w/o any search. But I made a terrible mistake of deceiving (myself in that particular case) that what I copied from the paper makes sense (I think when I was checking, I was checking vs paper and not vs common sense)
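For reference, a ‘from memory’ contrastive loss of the kind mentioned above can be sketched as below. To be clear about assumptions: this is the softmax-based symmetric InfoNCE (CLIP-style) variant, not the sigmoid loss from the SigLIP papers and not the code from the interview. The names and the 0.07 temperature are illustrative, and it is plain python only to stay dependency-free.

```python
import math


def log_softmax_row(row):
    """Log-softmax of one row, using the log-sum-exp trick for stability."""
    m = max(row)
    lse = m + math.log(sum(math.exp(x - m) for x in row))
    return [x - lse for x in row]


def contrastive_loss(a, b, temperature=0.07):
    """Symmetric InfoNCE for paired embeddings a[i] <-> b[i].

    Assumes every embedding is already L2-normalised, so the dot
    product is the cosine similarity.
    """
    n = len(a)
    # sim[i][j]: similarity of a[i] and b[j], scaled by the temperature
    sim = [
        [sum(x * y for x, y in zip(a[i], b[j])) / temperature for j in range(n)]
        for i in range(n)
    ]
    # a -> b: row i should put its probability mass on column i
    loss_ab = -sum(log_softmax_row(sim[i])[i] for i in range(n)) / n
    # b -> a: the same on the transposed similarity matrix
    sim_t = [list(col) for col in zip(*sim)]
    loss_ba = -sum(log_softmax_row(sim_t[i])[i] for i in range(n)) / n
    return 0.5 * (loss_ab + loss_ba)
```

The ‘common sense’ checks take seconds: perfectly matched pairs should give a loss near 0, swapped pairs a large loss, and identical similarities everywhere should give exactly log(batch size). In pytorch the same thing is cross-entropy over the similarity matrix, with the diagonal as targets.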

AI-assisted coding

Do you even write the code yourself now? Anyway my last interview of that type in 2025 was in June so the way it works now might have updated a little bit.

I had only 2 such interviews but feels important to share as they should become more frequent. You share the screen and work as you usually do, can use everything. On one of the interviews I needed to call some ai models (llms/image/video/etc) in the project itself - for that the interviewer was working in parallel with me to give me api keys from anything (they’d sign up if they didn’t have the keys already).

Since the sample size is small I’ll just describe what the interviews looked like:

The first one was a double-micromanagement (the interviewer was micromanaging me and I was micromanaging the AI).

- I was given a task but in small parts w/o knowing what’s next and what the entire thing is supposed to do. The mini-tasks were on a level of what could be achieved with 1-2 prompts in mid 2025 but you have to be smart about the prompt and the way you do it reveals your experience and decision making to some extent

- The problem was some kind of data exploration + building simple heuristic solution on top. the data input ‘evolved’ as well as the interview progressed

- While very annoying to experience as a candidate the theoretical goal of the interview is to simulate real life environment when you learn the problem and what needs to be done gradually

The second one started with “To warm up - can you tell me about a recent project which you really liked?”. And then after your reply - “OK now please implement it in the time we have for the interview to the extent possible :)”.

- I was free to decide what exactly to implement (since the ‘whole project’ was obviously completely unreasonable in 1h)

- I somewhat got stuck at debugging towards the end of the interview but luckily found good enough workarounds to have a working prototype

- This interview I liked a lot as a candidate because you don’t waste time on understanding the context and are challenged to do what you did at some point but much much faster. From the interviewer’s perspective it’s a sanity check that the candidate can work fast & use the tools efficiently (and also it’s a lifehack to learn how other people work with the ai tools)

At the time I used ChatGPT + 2 custom extensions (for browser & for IDE) to copy-paste the entire project to it & load diffs by hotkeys and it was good enough. Now I would use just claude code (or whatever new tool would be dominant at the time of the interview)

Homeworks

Since we touched on AI coding the homeworks feel like the right thing to put here (because you can officially do absolutely whatever while doing them).

I was given 3 homeworks with 4-6 hours time recommended to spend on each. I guess no one checks the time constraint but if your solution is too sophisticated it’d be obvious. The ‘task’ was just some files sent to you and once you finish you send your solution back - not as some external system which only gives you N hours and that’s it. I actually did each of them with large breaks which helps to think and come up with ideas in the background. Which may or may not have been a violation.

The first was to train a diffusion model on 2d keypoints dataset (.zip archive). Since the data is low-dimensional the training is fast (minutes). You are provided with some code to load the data but other than this you are to implement anything as you wish and you don’t have to use the original code either. The Readme of the problem was telling to focus on quality (code quality in particular) and documentation (‘how you approach the problem and visualize/evaluate results’). I got the results which were ‘ok’ visually (end business problem was not provided but the generated outputs were looking plausibly good and somewhat diverse) and spent the rest of the time on documentation/cleanup. We had a discussion of the homework (and extra questions) during the next interview.

The second homework (again ‘write code for diffusion model training/inference on mnist’ + some domain-specific extra on top) was a university-style colab notebook with sections you should fill but some boilerplate/structure already provided for you. For whatever reason my results were a bit crappy but more or less working on all sections so it was good enough. I also did flow matching instead of a standard diffusion and was asked why later to which I said it is just straight up better and simpler - why bother with sqrt and whatnot

The third one was to create AWS endpoint for hosting some of the recently released google models for inference. I realised I didn’t want to be in that position (more based on other signals but the homework strengthened my feelings) and decided not to do it - so the homework served its purpose in a way

ML Theory & Practice

ML Depth/Breadth

Questions to reveal your theoretical knowledge of different things (e.g. transformer architecture, batch norm, kv-caching, regularization methods, etc). Usually a small subsection/part of the interview (of almost any technical one except pure leetcode). ML design and to a lesser extent ML coding usually expose your knowledge of these as well (but indirectly/unprompted).

In terms of format it would almost always be an oral discussion with an exception of ‘compute number of parameters of self-attn/conv/etc layer’ kind of questions where you are entering replies into the chat section of the meeting.
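The ‘compute number of parameters’ questions are plain arithmetic, so a quick sketch of the formulas may help. It assumes the standard formulations: self-attention with four d_model -> d_model projections (Q, K, V, output), and a 2d convolution with one bias per output channel. The helper names are made up for illustration and things like layer norms are not counted.

```python
def linear_params(d_in, d_out, bias=True):
    """Weight matrix plus optional bias vector."""
    return d_in * d_out + (d_out if bias else 0)


def self_attention_params(d_model, bias=True):
    """Q, K, V and output projections, each d_model -> d_model.

    The number of heads does not change the count: heads only split
    the same projections into chunks.
    """
    return 4 * linear_params(d_model, d_model, bias)


def conv2d_params(c_in, c_out, kernel_size, bias=True, groups=1):
    """One (c_in / groups) x k_h x k_w filter per output channel, plus biases."""
    k_h, k_w = kernel_size
    return (c_in // groups) * k_h * k_w * c_out + (c_out if bias else 0)


print(self_attention_params(512))                 # 1050624
print(conv2d_params(3, 64, (7, 7), bias=False))   # 9408
print(conv2d_params(64, 128, (3, 3)))             # 73856
```

Saying out loud which conventions you assume (biases or not, whether the output projection is included) is usually more important than the final number.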

I noticed there are 2 main ways to ask a question (w/o going to ML design)

Direct question

“What is X” / “Tell me about Y” / … - you are explicitly asked about a very specific thing and you need to tell exactly about it.

Examples from the interviews:

- How batch normalization works?

- Is sigmoid used often? Why? Where used?

- Can you explain what diffusion is?

- Explain from a high level point of view how a new token is generated from a sequence which contained 10 tokens?

Usually easy for the candidate (unless you somehow have no idea at all which is rare).

Overview

The question forces you to reveal your knowledge on the topic w/o asking smth very specific.

Examples from interviews:

- Tell me ALL regularization methods you know.

- What kind of bottlenecks you might face when you are running inference on an LLM?

- Your loss is NaN after a few epochs - what can be the reason and how would you debug it?

Harder for the candidate because you don’t have that anchor. Easy to forget smth especially if you’re not very familiar with the topic. Good question for the interviewer to find experienced candidates.

The need for an overview is also likely to arise in more active and more narrow ML Designs, where the interviewer would ask not only ‘how would you X’ but explicitly ask what are the different ways to do that (and what you would prefer and why). It might make sense to give light overviews in ML design per section anyway (unprompted) but more on that later.

How to reply

I realised somewhere in the middle of the interviews that you can reply very differently even to the ‘simple’ questions.

Let’s say you’re asked “How does batch normalization work?”

You can:

- (1) ‘skip through’ the question showing superficial knowledge (e.g. tell how the operation works and over which channel the aggregation happens)

- to which the interviewer would likely ask a few iterations of probing questions (e.g. ‘does it behave similarly in train/test?’)

- what I realised at some point is that you don’t have to wait for it and can just demonstrate +- the entirety of your knowledge (high level at least) - see below

- (2) ‘give a full overview’ - basically reply to any question as if it were an overview question, giving as complete a picture as possible to demonstrate your knowledge/experience on the topic

- in case of batch norm you can (unprompted) after the basic definition explain any or all of: why use it at all? train-test discrepancy and ways to fix it; where to put in the architecture; that you can merge it with linear to speed up inference; alternative normalization layers and when to use vs each of them; distributed training issues/nuances with it, etc

- note that a good interviewer would still be able to ask you even deeper questions, so be ready for that. E.g. for batch norm I was asked ‘how would you implement sync batch norm’. I didn’t know, so I tried a lazy ‘I would use syncbn() and so it’s just auto-synced’, and after getting pressed further came up with ‘you can transfer mean & mean^2 from each node’ (the tricky part is the std ofc: if you pass per-node stds and just average them, the estimate will not be precise)

- I feel like that’s the right/better way to answer a question as it shows your interviewer what you actually know a lot faster w/o playing back and forth questions

Now an overview question forces you to give a reply closer to (2) but maybe all questions should be addressed in that fashion by default (unless your interviewer doesn’t like it for some reason - which never happened to me).
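Two of the batch norm points mentioned above can be checked numerically. This is a minimal pure-Python sketch under my own assumptions, not how any specific library implements it: (a) combining per-node mean and mean of squares gives the exact full-batch variance, while averaging per-node stds does not; (b) an eval-mode batch norm that follows a linear layer can be folded into that layer's weights. All function names and numbers are hypothetical.

```python
import math
import statistics


def local_stats(values):
    """What one node would send: count, mean and mean of squares."""
    n = len(values)
    return n, sum(values) / n, sum(v * v for v in values) / n


def combine_stats(per_node):
    """Merge per-node (count, mean, mean_sq) into a global mean and biased variance."""
    total = sum(n for n, _, _ in per_node)
    mean = sum(n * m for n, m, _ in per_node) / total
    mean_sq = sum(n * m2 for n, _, m2 in per_node) / total
    return mean, mean_sq - mean ** 2  # Var[x] = E[x^2] - E[x]^2


def fold_bn_into_linear(W, b, gamma, beta, mean, var, eps=1e-5):
    """Fold an eval-mode batch norm that follows a linear layer into its weights."""
    W_f, b_f = [], []
    for row, b_j, g, bt, m, v in zip(W, b, gamma, beta, mean, var):
        scale = g / math.sqrt(v + eps)
        W_f.append([w * scale for w in row])
        b_f.append((b_j - m) * scale + bt)
    return W_f, b_f


def linear(W, b, x):
    return [sum(w * xi for w, xi in zip(row, x)) + b_j for row, b_j in zip(W, b)]


# (a) statistics of one channel whose batch is split across 3 nodes
node_batches = [[1.0, 2.0, 3.0], [10.0, 12.0], [-4.0, 0.0, 4.0, 8.0]]
global_mean, global_var = combine_stats([local_stats(b) for b in node_batches])
all_values = [v for batch in node_batches for v in batch]
exact_std = statistics.pstdev(all_values)
naive_std = sum(statistics.pstdev(b) for b in node_batches) / len(node_batches)
# global_var matches the full-batch variance; naive_std is far from exact_std

# (b) linear -> batch norm (eval mode) equals a single folded linear layer
W, b = [[0.5, -1.0, 2.0], [1.5, 0.0, -0.5]], [0.1, -0.2]
gamma, beta, run_mean, run_var = [1.2, 0.8], [0.0, 0.5], [0.3, -0.1], [4.0, 0.25]
x, eps = [0.5, -1.0, 2.0], 1e-5
bn_out = [g * (y - m) / math.sqrt(v + eps) + bt
          for y, g, bt, m, v in zip(linear(W, b, x), gamma, beta, run_mean, run_var)]
W_f, b_f = fold_bn_into_linear(W, b, gamma, beta, run_mean, run_var, eps)
folded_out = linear(W_f, b_f, x)
```

One caveat worth mentioning if asked: computing variance as E[x^2] - E[x]^2 can lose precision when the mean is large relative to the spread, so this is a sketch of the idea rather than a numerically careful implementation.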

One more thing: your knowledge should be strongly embedded into your memory, because otherwise you may forget minor ‘unimportant’ details when answering another question (in ML Depth or ML Design). As an example: during an ML design interview with Deepmind (the question was smth alphafold-like for molecules, which I’m not that familiar with) I was asked among other things about the architecture (transformer-something in my solution) and I didn’t even remember that I needed to mention normalization layers, because I was focused on other things. If prompted, I of course would have replied; in fact, in the interview directly before that one I was asked to explain the ‘transformer architecture’ and I described them there. This may give the interviewer more signal on how much you worked with something and how many things connect in your head I guess - not sure there’s a way to deliberately train such more distant connections but there might be.

How you can prepare for Deep Learning specific things

I spent nearly all my time on deep learning related things. I listed roughly what I needed to cover and gradually expanded the list and the notes:

- individual neural network layers: attention mechanisms, embeddings, normalization layers, activation functions, weight initializations, convolutions, pooling, dense, up/down sampling methods, loss functions, efficiency tricks

- blocks (combining layers) & architectures (combining blocks)

- fundamental approaches (Diffusion/Autoregressive/NeRFs/Splats/…)

- training & fine tuning (loras & what to finetune / RL methods / large scale training / training tricks / …)

- inference optimization (pruning/quantization/spec dec/distillation/…)

- applications & domain specific: text / image / video / audio & speech / 3D / …

I would just go deep into some topics until my curiosity was satisfied and I felt some sense of clarity. I’d add the new things I’d have to check later to the doc as I was noticing them. Probably the most helpful part was just reading the latest significant papers (at the time of my preparation - DeepSeek V3/R1 and Wan 2.1 technical reports - they are very long and dense with important stuff described in sufficient detail). It is probably sufficient to read and understand just 1 such paper per domain to figure out +- everything about its state of the art. It will take days (reports I mentioned were around 60 pages each) but I feel like it’s worth it.

I likely spent about 100 hours or more on this because I like that aspect the most. It was however almost never asked in such detail. So if you only need a quick pass maybe just revise the base layers / losses / metrics and check the latest significant paper/report for a domain relevant to your position quickly - which combined might be around 20 hours at most?

You can find my not-so-well-structured-or-completed notes here (composed around May 2025).

ML Design

Formats

This one is the least standardised and can be very different across companies and positions.

The only shared thing is that in some form you should explain ‘how would you build [some ML system]’.

Differences:

- Scope. There are generalist vs specialist positions, so similarly you may be asked/expected to cover either just about everything but more superficially (refining the problem requirements, choosing metrics, evaluations, data, features, model, training, validation, baselines, a/b, monitoring, etc) or smth very specific (e.g. a DL model architecture and a little bit of data/training)

- (Expected) level of involvement of your interviewer in the conversation. The ‘canonical’ way is for the interviewer to give ~1-3 sentences about the problem and reply to some of your questions afterwards (the less the interviewer talks - the better). But in reality it is often very conversational (well, in my case at least interviewers were participating more actively - I passed most of these and it didn’t look like a bad sign). Maybe a ‘perfect answer’ (which likely doesn’t exist) wouldn’t require the interviewer to talk, but in reality even at my interview at Meta the interviewer talked too much (to the extent that I was trying to shorten my replies, since it’s hard to finish the story within the time limit)

- Format - can be excalidraw/google doc/figma/miro board or just oral discussion. The first 2 happen more often than the others. Also you might be presented with some inputs upfront so you don’t start from scratch (context about environment/existing system/etc). Or it can be Reverse ML Design where you’d review (a very bad) ML Design doc from a ‘junior colleague’. Most of my interviews here were 45mins (some - 1h).

Examples (from actual interviews):

- Recommendation system for places (a few types of places, realtime)

- Recommendation system for 1 song on main screen after login

- Recommendation system for one specific physical event

- Personalized recommendations of starting questions user sees on AI chat app

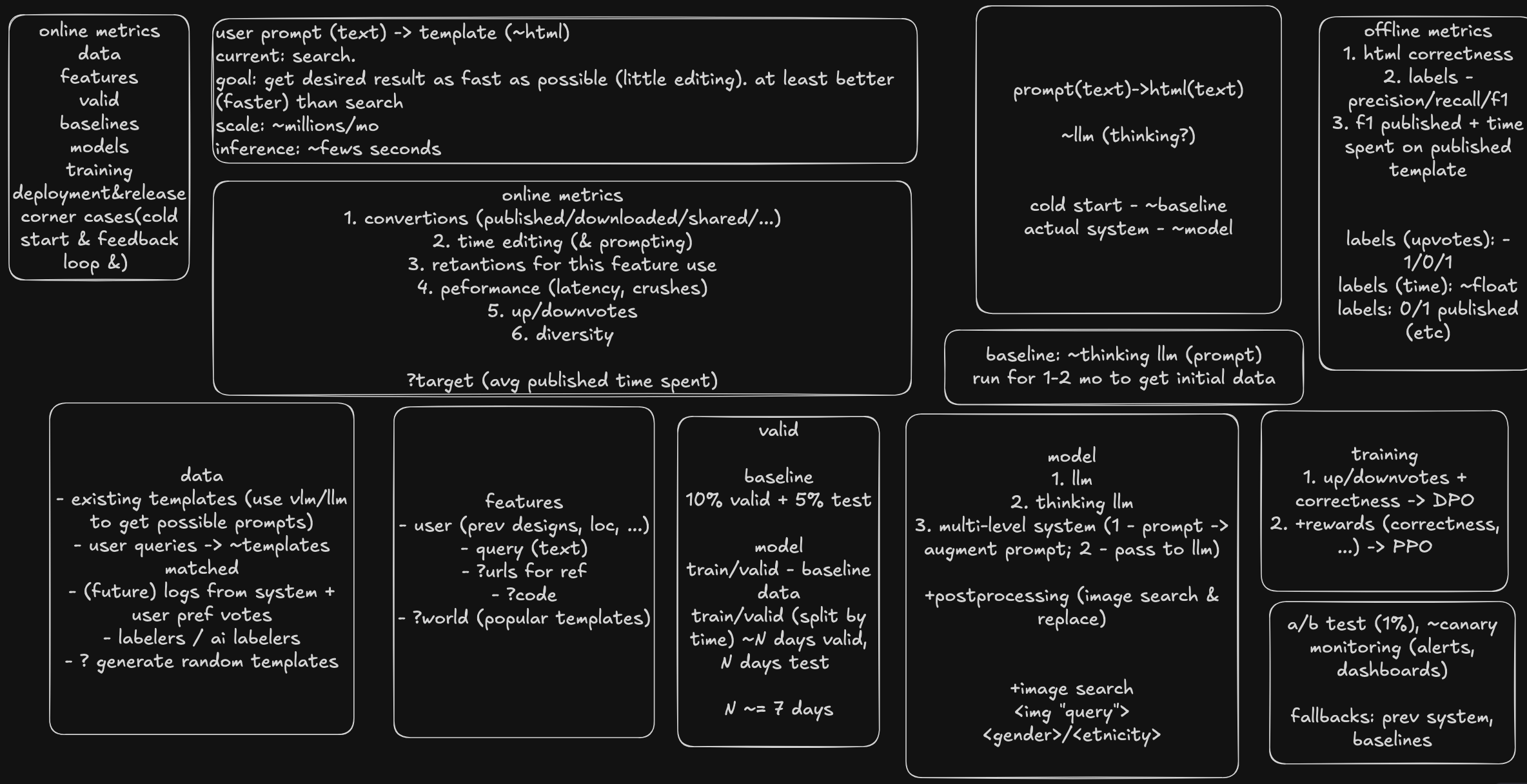

- Prompt to html-like template generation system with layers and images

- Prompt to arbitrary charts/visualizations system based on some internal data (sql db)

- Adapter for object consistency on top of foundational image generation model (given a reference image of an object + prompt - produce the same object in a different setup) - with explicit prompt to focus on architecture (and a bit of data/training)

- For a pair of molecules represented as strings of text predict some property

- Predict user activity in the app (will / will not be active at some intervals of time, e.g. hourly)

As you can see, it is mostly either some recommendation system or some form of foundational model customization.

Prep

Context: I had 0 ML design interviews before that in my life (maybe 1-2 but I couldn’t even remember) so it was pretty much preparing from scratch.

My prep

- Machine Learning System Design: With End-To-End Examples - good enough to cover all areas of ml system design on a high level (you likely know some specific sections better but lack in others - in my case at least)

- Looked for videos with example full interviews (what is considered good) and didn’t find any I’d consider as such… (the interviewees usually fail on lots of aspects of the interview).

- Deciding on the structure of the reply (see example in the image below)

- For specific companies / positions - some research on how to do things which might be asked. In particular I looked more into recommendation systems since I wasn’t building them previously, or sometimes got a hint from a recruiter on rough tasks like ‘usually something like text classification’ (which would turn out to be smth completely different in the end most of the time - but helps your grounding a bit)

- Mocks / self-mocks (a few self-mocks first to feel like I make sense at all -> mock -> more self-mocks)

- I tried to feed LLMs of the time (mid 2025) my self-mocks or actual interview replies but they weren’t very helpful - maybe it can be better now, but the main issue is that they’d try to feed you outdated solutions which should no longer be applied. So using your own or other humans’ judgement was a very important part

- For mocks it’s a good idea to ask your connections who recently passed such interviews (especially into the company you are applying to)

Regardless of how exactly you decide to prepare - STRUCTURE is the most important - you need to tell a smooth well-connected story. Practice (mocks/self-mocks) is likely the most helpful way to get there.

My structure towards the end of the interviews

I ended up replying with smth like the screenshot below, accompanied by a lot of talking. During the interview I placed all the sections on 1 screen so it’s easy to keep everything in front of me & the interviewer. I was just making the blocks as the story goes while covering them. Sometimes I might have returned to the previous ones if I’d suddenly remember smth (but ideally you shouldn’t do that).

In terms of the order:

- understand the problem (why, constraints, scale)

- at the beginning you should understand the problem and ask clarifying questions. a good way to ask questions is to make assumptions (e.g. not ‘how many users do we expect?’ but ‘[company] has roughly 10M DAU so for this system it’d be probably 10% of that, does it sound about right?’). the company numbers are smth for you to look up before the interview

- it’s important to figure out

- why you are doing the system (what exactly is needed?)

- is there an existing solution and why it’s not sufficient (the second part is usually obvious but in some cases not)

- constraints/limitations (interpretability? diversity? model size [e.g. running on mobile]? etc). Feels like an important thing for a real ML design, but in the interviews I mentioned it a few times and interviewers would always say these things are not a concern. So unless the problem screams about it, either skip it or do a short mention to demonstrate you’re aware of such things (‘it seems like we don’t have special constraints like interpretability or diversity here or at least let’s leave it out of the scope of the interview’)

- maybe a useful thing to ask is which platform you’re developing for (or all). on mobile the screen size is smaller and fewer items would be shown, which most likely should affect the choice of your metric. you can ask if it’s mobile or not (the world is on mobile now mostly) and comment why you are asking. more generally ux may affect your system’s intended behaviour/priorities (but it’s probably not wise to discuss it in detail or think about it - you don’t have much time)

- scale (total users, requests/s, inference time per request, etc). note that requests/s has spikes

- corner cases (usually cold start & feedback loop). To me it feels like smth you should put into the doc so you will not forget about it later (or that you at least mention them if the interview is not running smoothly and you won’t have much time to comment on that).

- after that things are more straightforward and the interviewer is a lot less active (almost dormant)
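The scale part of ‘understand the problem’ is mostly back-of-the-envelope arithmetic, which can be sketched as below. The 10M DAU and 10% share come from the example question above; the requests per user per day and the peak factor are hypothetical numbers I made up for illustration, and `estimate_load` is my own helper name.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def estimate_load(company_dau, feature_share, requests_per_user_per_day, peak_factor):
    """Rough request-rate estimate from assumed usage numbers."""
    feature_dau = company_dau * feature_share
    requests_per_day = feature_dau * requests_per_user_per_day
    avg_rps = requests_per_day / SECONDS_PER_DAY
    return {
        "feature_dau": feature_dau,
        "avg_rps": avg_rps,
        "peak_rps": avg_rps * peak_factor,  # requests/s has spikes
    }


load = estimate_load(
    company_dau=10_000_000,       # from the example question in the text
    feature_share=0.10,           # from the example question in the text
    requests_per_user_per_day=5,  # hypothetical
    peak_factor=3.0,              # hypothetical
)
print({name: round(value) for name, value in load.items()})
```

With these assumed inputs that is about 1M daily users of the feature, roughly 58 requests/s on average and about 174 at peak. The point is less the exact numbers and more stating them out loud so the interviewer can correct you.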

- online metrics. once you understand the problem it’s important to figure out how to evaluate your system. list what feels easy and important to collect about your system and then figure out the target metric (think & suggest and ask your interviewer to confirm). the ideal target metric is probably connected to money but it’s not always easy and natural so don’t try too hard on that aspect. sometimes the target metric would be one thing but under the constraint of another thing not going over some threshold

- data. just list any useful raw sources. prev solution? some company data (which)? can you scrape from the internet? buy? public datasets (unlikely helpful)? can you generate the data? would human labeling help? these would help you get off the ground, but after that - which data can you collect that would help your system improve?